Optical fiber

An optical fiber (or optical fibre) is a flexible, transparent fiber made by drawing glass (silica) or plastic to a diameter slightly thicker than that of a human hair. Optical fibers are used most often to transmit light between the two ends of the fiber and are widely used in fiber-optic communications, where they permit transmission over longer distances and at higher bandwidths (data rates) than wire cables. Fibers are used instead of metal wires because signals travel along them with less loss; in addition, fibers are immune to electromagnetic interference, a problem from which metal wires suffer. Fibers are also used for illumination, and are wrapped in bundles so that they may be used to carry images, thus allowing viewing in confined spaces, as in the case of a fiberscope. Specially designed fibers are also used for a variety of other applications, including fiber optic sensors and fiber lasers.

Optical fibers typically include a transparent core surrounded by a transparent cladding material with a lower index of refraction. Light is kept in the core by the phenomenon of total internal reflection, which causes the fiber to act as a waveguide. Fibers that support many propagation paths or transverse modes are called multi-mode fibers (MMF), while those that support a single mode are called single-mode fibers (SMF). Multi-mode fibers generally have a wider core diameter and are used for short-distance communication links and for applications where high power must be transmitted. Single-mode fibers are used for most communication links longer than 1,000 meters (3,300 ft).
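The condition for total internal reflection described above can be illustrated with a short calculation. The following is a minimal sketch, assuming a step-index fiber and using Snell's law; the refractive indices 1.48 (core) and 1.46 (cladding) are illustrative assumptions, not values from this article, and the function names are hypothetical.

```python
import math


def critical_angle_deg(n_core: float, n_clad: float) -> float:
    """Critical angle at the core-cladding boundary, measured from the normal.

    Rays striking the boundary at a larger angle are totally internally reflected.
    """
    if n_clad >= n_core:
        raise ValueError("total internal reflection requires n_clad < n_core")
    return math.degrees(math.asin(n_clad / n_core))


def numerical_aperture(n_core: float, n_clad: float) -> float:
    """Numerical aperture of a step-index fiber: sqrt(n_core^2 - n_clad^2)."""
    if n_clad >= n_core:
        raise ValueError("guiding requires n_clad < n_core")
    return math.sqrt(n_core**2 - n_clad**2)


def acceptance_half_angle_deg(n_core: float, n_clad: float, n_outside: float = 1.0) -> float:
    """Largest launch angle (from the fiber axis) that is still guided."""
    na = numerical_aperture(n_core, n_clad)
    # Clamp so that asin stays defined for very high-NA fibers.
    return math.degrees(math.asin(min(1.0, na / n_outside)))


if __name__ == "__main__":
    n_core, n_clad = 1.48, 1.46  # assumed, illustrative indices
    print(f"critical angle:   {critical_angle_deg(n_core, n_clad):.2f} deg")
    print(f"numerical apert.: {numerical_aperture(n_core, n_clad):.4f}")
    print(f"acceptance angle: {acceptance_half_angle_deg(n_core, n_clad):.2f} deg")
```

The smaller the index difference between core and cladding, the closer the critical angle is to 90 degrees and the narrower the cone of light the fiber accepts.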

An important aspect of fiber-optic communication is extending fiber optic cables so that the losses introduced by joining two cables are kept to a minimum. Joining lengths of optical fiber is often more complex than joining electrical wire or cable, and involves careful cleaving of the fibers, precise alignment of the fiber cores, and splicing of the aligned cores. For applications that demand a permanent connection, either a mechanical splice, which holds the ends of the fibers together mechanically, or a fusion splice, which uses heat to fuse the ends of the fibers together, can be used. Temporary or semi-permanent connections are made by means of specialized optical fiber connectors.

The field of applied science and engineering concerned with the design and application of optical fibers is known as fiber optics.

History

Guiding of light by refraction, the principle that makes fiber optics possible, was first demonstrated by Daniel Colladon and Jacques Babinet in Paris in the early 1840s. John Tyndall included a demonstration of it in his public lectures in London, 12 years later. Tyndall also wrote about the property of total internal reflection in an introductory book about the nature of light in 1870.

Unpigmented human hairs have also been shown to act as an optical fiber.

Practical applications, such as close internal illumination during dentistry, appeared early in the twentieth century. Image transmission through tubes was demonstrated independently by the radio experimenter Clarence Hansell and the television pioneer John Logie Baird in the 1920s. The principle was first used for internal medical examinations by Heinrich Lamm in the following decade. Modern optical fibers, where the glass fiber is coated with a transparent cladding to offer a more suitable refractive index, appeared later in the decade. Development then focused on fiber bundles for image transmission. Harold Hopkins and Narinder Singh Kapany at Imperial College in London achieved low-loss light transmission through a 75 cm long bundle which combined several thousand fibers. Their article titled "A flexible fibrescope, using static scanning" was published in the journal Nature in 1954. The first fiber optic semi-flexible gastroscope was patented by Basil Hirschowitz, C. Wilbur Peters, and Lawrence E. Curtiss, researchers at the University of Michigan, in 1956. In the process of developing the gastroscope, Curtiss produced the first glass-clad fibers; previous optical fibers had relied on air or impractical oils and waxes as the low-index cladding material.

A variety of other image transmission applications soon followed.

In 1880 Alexander Graham Bell and Sumner Tainter invented the Photophone at the Volta Laboratory in Washington, D.C., to transmit voice signals over an optical beam. It was an advanced form of telecommunications, but it was subject to atmospheric interference and remained impractical until fiber-optical systems offered secure transport of light. In the late 19th and early 20th centuries, light was guided through bent glass rods to illuminate body cavities. Jun-ichi Nishizawa, a Japanese scientist at Tohoku University, also proposed the use of optical fibers for communications in 1963, as stated in his book published in 2004 in India. Nishizawa invented other technologies that contributed to the development of optical fiber communications, such as the graded-index optical fiber as a channel for transmitting light from semiconductor lasers. The first working fiber-optical data transmission system was demonstrated by German physicist Manfred Börner at Telefunken Research Labs in Ulm in 1965, which was followed by the first patent application for this technology in 1966. Charles K. Kao and George A. Hockham of the British company Standard Telephones and Cables (STC) were the first to promote the idea that the attenuation in optical fibers could be reduced below 20 decibels per kilometer (dB/km), making fibers a practical communication medium. They proposed that the attenuation in fibers available at the time was caused by impurities that could be removed, rather than by fundamental physical effects such as scattering. They correctly and systematically theorized the light-loss properties for optical fiber, and pointed out the right material to use for such fibers: silica glass with high purity. This discovery earned Kao the Nobel Prize in Physics in 2009.

NASA used fiber optics in the television cameras that were sent to the moon. At the time, the use in the cameras was classified confidential, and only those with sufficient security clearance or those accompanied by someone with the right security clearance were permitted to handle the cameras.

The crucial attenuation limit of 20 dB/km was first achieved in 1970, by researchers Robert D. Maurer, Donald Keck, Peter C. Schultz, and Frank Zimar working for American glass maker Corning Glass Works, now Corning Incorporated. They demonstrated a fiber with 17 dB/km attenuation by doping silica glass with titanium. A few years later they produced a fiber with only 4 dB/km attenuation using germanium dioxide as the core dopant. Such low attenuation ushered in the era of optical fiber telecommunication. In 1981, General Electric produced fused quartz ingots that could be drawn into strands 25 miles (40 km) long.

Attenuation in modern optical cables is far less than in electrical copper cables, leading to long-haul fiber connections with repeater distances of 70–150 kilometers (43–93 mi). The erbium-doped fiber amplifier, which reduced the cost of long-distance fiber systems by reducing or eliminating optical-electrical-optical repeaters, was co-developed by teams led by David N. Payne of the University of Southampton and Emmanuel Desurvire at Bell Labs in 1986. Robust modern optical fiber uses glass for both core and sheath, and is therefore less prone to aging. It was invented by Gerhard Bernsee of Schott Glass in Germany in 1973.

The emerging field of photonic crystals led to the development in 1991 of photonic-crystal fiber, which guides light by diffraction from a periodic structure, rather than by total internal reflection. The first photonic crystal fibers became commercially available in 2000. Photonic crystal fibers can carry higher power than conventional fibers and their wavelength-dependent properties can be manipulated to improve performance.

Uses

Communication

Main article: Fiber-optic communication

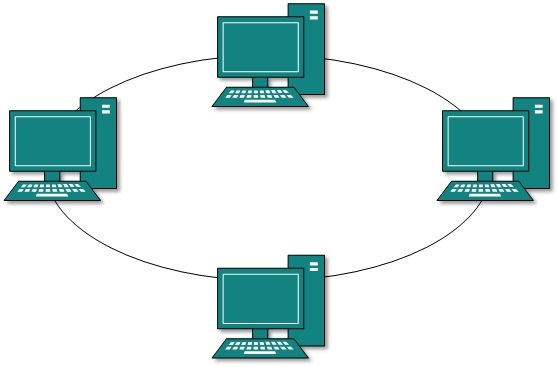

Optical fiber can be used as a medium for telecommunication and computer networking because it is flexible and can be bundled as cables. It is especially advantageous for long-distance communications, because light propagates through the fiber with little attenuation compared to electrical cables. This allows long distances to be spanned with few repeaters.

The per-channel light signals propagating in the fiber have been modulated at rates as high as 111 gigabits per second (Gbit/s) by NTT, although 10 or 40 Gbit/s is typical in deployed systems. In June 2013, researchers demonstrated transmission of 400 Gbit/s over a single channel using 4-mode orbital angular momentum multiplexing.

Each fiber can carry many independent channels, each using a different wavelength of light, a technique known as wavelength-division multiplexing (WDM). The net data rate (data rate without overhead bytes) per fiber is the per-channel data rate reduced by the FEC overhead, multiplied by the number of channels (usually up to eighty in commercial dense WDM systems as of 2008). As of 2011 the record for bandwidth on a single core was 101 Tbit/s (370 channels at 273 Gbit/s each). The record for a multi-core fiber as of January 2013 was 1.05 petabits per second. In 2009, Bell Labs broke the 100 (petabit per second)×kilometer barrier (15.5 Tbit/s over a single 7,000 km fiber).
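The arithmetic behind these figures can be sketched in a few lines. This is a minimal illustration, not a model of any real system: the function names are hypothetical, and the FEC overhead is assumed to be expressed as a fraction of the payload (so payload = line rate / (1 + overhead)), which is only one of several conventions.

```python
def net_rate_per_fiber_gbps(line_rate_gbps: float,
                            fec_overhead_fraction: float,
                            channels: int) -> float:
    """Net rate per fiber: per-channel rate reduced by FEC overhead, times channels.

    Assumption: overhead is a fraction of the payload, so
    payload = line_rate / (1 + overhead).
    """
    payload_per_channel = line_rate_gbps / (1.0 + fec_overhead_fraction)
    return payload_per_channel * channels


def capacity_distance_pbps_km(rate_tbps: float, distance_km: float) -> float:
    """Capacity-distance product in (Pbit/s) x km, from Tbit/s and km."""
    return rate_tbps * distance_km / 1000.0


if __name__ == "__main__":
    # Single-core record quoted above: 370 channels at 273 Gbit/s each.
    total_gbps = net_rate_per_fiber_gbps(273, 0.0, 370)
    print(f"370 x 273 Gbit/s = {total_gbps / 1000:.2f} Tbit/s")

    # Bell Labs figure quoted above: 15.5 Tbit/s over 7,000 km.
    print(f"15.5 Tbit/s x 7000 km = {capacity_distance_pbps_km(15.5, 7000):.1f} Pbit/s.km")

    # Hypothetical deployed system: 80 channels at 10 Gbit/s with 7% FEC overhead.
    print(f"80 x 10 Gbit/s, 7% FEC: {net_rate_per_fiber_gbps(10, 0.07, 80):.1f} Gbit/s net")
```

With zero overhead the first calculation reproduces the roughly 101 Tbit/s single-core figure, and the second confirms that 15.5 Tbit/s over 7,000 km exceeds 100 (Pbit/s)×km.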

For short-distance applications, such as a network in an office building, fiber-optic cabling can save space in cable ducts. This is because a single fiber can carry much more data than electrical cables such as standard category 5 Ethernet cabling, which typically runs at 100 Mbit/s or 1 Gbit/s speeds. Fiber is also immune to electrical interference; there is no cross-talk between signals in different cables, and no pickup of environmental noise. Non-armored fiber cables do not conduct electricity, which makes fiber a good solution for protecting communications equipment in high-voltage environments, such as power generation facilities, or metal communication structures prone to lightning strikes. They can also be used in environments where explosive fumes are present, without danger of ignition. Wiretapping (in this case, fiber tapping) is more difficult than with electrical connections, and there are concentric dual-core fibers that are said to be tap-proof.

Fibers are often also used for short-distance connections between devices. For example, most high-definition televisions offer a digital audio optical connection. This allows the streaming of audio over light, using the TOSLINK protocol.

Advantages over copper wiring

The advantages of optical fiber communication with respect to copper wire systems are:

- Broad bandwidth: A single optical fiber can carry 3,000,000 full-duplex voice calls or 90,000 TV channels.

- Immunity to electromagnetic interference: Light transmission through optical fibers is unaffected by other electromagnetic radiation nearby. The optical fiber is electrically non-conductive, so it does not act as an antenna to pick up electromagnetic signals. Information traveling inside the optical fiber is immune to electromagnetic interference, even electromagnetic pulses generated by nuclear devices.

- Low attenuation loss over long distances: Attenuation loss can be as low as 0.2 dB/km in optical fiber cables, allowing transmission over long distances without the need for repeaters.

- Electrical insulator: Optical fibers do not conduct electricity, preventing problems with ground loops and conduction of lightning. Optical fibers can be strung on poles alongside high voltage power cables.

- Material cost and theft prevention: Conventional cable systems use large amounts of copper. In some places, this copper is a target for theft due to its value on the scrap market.
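To put the attenuation figure from the list above (0.2 dB/km) in perspective, decibel loss can be converted to a fraction of optical power remaining. The sketch below assumes attenuation is uniform along the fiber and ignores splice and connector losses; the 25 dB loss budget is a hypothetical value chosen for illustration, and the function names are not from any library.

```python
def remaining_power_fraction(alpha_db_per_km: float, length_km: float) -> float:
    """Fraction of launched optical power left after length_km of fiber.

    Total loss in dB is alpha * length; a loss of X dB corresponds to
    a power ratio of 10 ** (-X / 10).
    """
    total_loss_db = alpha_db_per_km * length_km
    return 10 ** (-total_loss_db / 10)


def max_span_km(alpha_db_per_km: float, loss_budget_db: float) -> float:
    """Longest unamplified span for a given loss budget (fiber loss only)."""
    if alpha_db_per_km <= 0:
        raise ValueError("attenuation must be positive")
    return loss_budget_db / alpha_db_per_km


if __name__ == "__main__":
    # Early threshold of 20 dB/km: power remaining after a single kilometer.
    print(f"20 dB/km, 1 km:    {remaining_power_fraction(20, 1):.2%}")
    # Modern fiber at 0.2 dB/km: power remaining after 100 km.
    print(f"0.2 dB/km, 100 km: {remaining_power_fraction(0.2, 100):.2%}")
    # Hypothetical 25 dB loss budget between transmitter and receiver.
    print(f"span at 0.2 dB/km with 25 dB budget: {max_span_km(0.2, 25):.0f} km")
```

At 20 dB/km a signal loses 99% of its power in one kilometer; at 0.2 dB/km the same loss takes 100 km, which is why spans of tens of kilometers between repeaters become feasible.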

Sensors

Main article: Fiber optic sensor

Fibers have many uses in remote sensing. In some applications, the sensor is itself an optical fiber. In other cases, fiber is used to connect a non-fiberoptic sensor to a measurement system. Depending on the application, fiber may be used because of its small size, or the fact that no electrical power is needed at the remote location, or because many sensors can be multiplexed along the length of a fiber by using different wavelengths of light for each sensor, or by sensing the time delay as light passes along the fiber through each sensor. Time delay can be determined using a device such as an optical time-domain reflectometer.
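The time-delay approach can be illustrated with the basic relation a reflectometer relies on: a reflection from a point at distance d returns after the light has travelled 2d at speed c/n. The following is a minimal sketch; the group index of 1.468 is an assumed, typical-order value for silica fiber rather than a figure from this article, and the function names are hypothetical.

```python
C_VACUUM_M_PER_S = 299_792_458.0  # speed of light in vacuum


def distance_from_round_trip(delay_s: float, group_index: float = 1.468) -> float:
    """Distance (m) to a reflecting point, given the round-trip delay in seconds.

    Light covers the distance twice (out and back) at speed c / group_index.
    """
    if delay_s < 0:
        raise ValueError("delay must be non-negative")
    return C_VACUUM_M_PER_S * delay_s / (2.0 * group_index)


def round_trip_delay(distance_m: float, group_index: float = 1.468) -> float:
    """Inverse relation: round-trip delay (s) for a reflector at distance_m."""
    return 2.0 * group_index * distance_m / C_VACUUM_M_PER_S


if __name__ == "__main__":
    # Hypothetical echoes from three sensors along one fiber.
    for delay_us in (1.0, 5.0, 10.0):
        d = distance_from_round_trip(delay_us * 1e-6)
        print(f"echo after {delay_us:4.1f} us -> sensor at about {d:7.1f} m")
```

Because each sensor position maps to a distinct delay, echoes arriving at different times can be attributed to different sensors on the same fiber.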

Optical fibers can be used as sensors to measure strain, temperature, pressure and other quantities by modifying a fiber so that the property being measured modulates the intensity, phase, polarization, wavelength, or transit time of light in the fiber. Sensors that vary the intensity of light are the simplest, since only a simple source and detector are required. A particularly useful feature of such fiber optic sensors is that they can, if required, provide distributed sensing over distances of up to one meter. In contrast, highly localized measurements can be provided by integrating miniaturized sensing elements with the tip of the fiber. These can be implemented by various micro- and nanofabrication technologies, such that they do not exceed the microscopic boundary of the fiber tip, allowing such applications as insertion into blood vessels via hypodermic needle.

Extrinsic fiber optic sensors use an optical fiber cable, normally a multi-mode one, to transmit modulated light from either a non-fiber optical sensor or an electronic sensor connected to an optical transmitter. A major benefit of extrinsic sensors is their ability to reach otherwise inaccessible places. An example is the measurement of temperature inside aircraft jet engines by using a fiber to transmit radiation into a radiation pyrometer outside the engine. Extrinsic sensors can be used in the same way to measure the internal temperature of electrical transformers, where the extreme electromagnetic fields present make other measurement techniques impossible. Extrinsic sensors measure vibration, rotation, displacement, velocity, acceleration, torque, and twisting. A solid state version of the gyroscope, using the interference of light, has been developed. The fiber optic gyroscope (FOG) has no moving parts, and exploits the Sagnac effect to detect mechanical rotation.
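The Sagnac effect used by the fiber optic gyroscope can be quantified with the standard expression for the phase difference between the two counter-propagating beams in a fiber coil, Δφ = 2πLDΩ / (λc), where L is the fiber length, D the coil diameter, λ the vacuum wavelength and Ω the rotation rate about the coil axis. The sketch below applies that formula; the coil dimensions and wavelength are hypothetical values chosen for illustration.

```python
import math

C_VACUUM_M_PER_S = 299_792_458.0  # speed of light in vacuum


def sagnac_phase_shift_rad(coil_length_m: float,
                           coil_diameter_m: float,
                           wavelength_m: float,
                           rotation_rate_rad_s: float) -> float:
    """Sagnac phase shift (rad) between counter-propagating beams in a fiber coil.

    Uses delta_phi = 2 * pi * L * D * Omega / (lambda * c), with rotation
    taken about the coil axis.
    """
    return (2.0 * math.pi * coil_length_m * coil_diameter_m * rotation_rate_rad_s
            / (wavelength_m * C_VACUUM_M_PER_S))


if __name__ == "__main__":
    # Hypothetical gyroscope: 1 km of fiber on a 10 cm coil, 1550 nm light.
    earth_rate = 7.292e-5  # Earth's rotation rate in rad/s
    shift = sagnac_phase_shift_rad(1000.0, 0.10, 1550e-9, earth_rate)
    print(f"phase shift at Earth rate: {shift:.3e} rad")
```

The phase shift scales with both fiber length and coil diameter, which is why winding a long fiber into a compact coil increases sensitivity without any moving parts.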

Common uses for fiber optic sensors include advanced intrusion detection security systems. Light is transmitted along a fiber optic sensor cable placed on a fence, pipeline, or communication cabling, and the returned signal is monitored. This return signal is digitally processed to detect disturbances and trip an alarm if an intrusion has occurred.
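The monitoring step can be sketched, in very simplified form, as comparing each returned-signal sample against a baseline recorded while the cable is undisturbed. This is an illustrative assumption about one possible processing scheme, not a description of how any particular product works; the function name, the baseline length and the threshold are all hypothetical.

```python
from statistics import mean, pstdev


def detect_disturbances(samples, baseline_len=20, threshold_sigmas=5.0):
    """Return indices of samples that deviate strongly from the quiet baseline.

    The first baseline_len samples are assumed to be recorded with the cable
    undisturbed; later samples are flagged when they differ from the baseline
    mean by more than threshold_sigmas standard deviations.
    """
    if len(samples) <= baseline_len:
        raise ValueError("need more samples than the baseline length")
    baseline = samples[:baseline_len]
    mu = mean(baseline)
    sigma = pstdev(baseline) or 1e-12  # avoid a zero threshold on a flat baseline
    limit = threshold_sigmas * sigma
    return [i for i, s in enumerate(samples[baseline_len:], start=baseline_len)
            if abs(s - mu) > limit]


if __name__ == "__main__":
    # Hypothetical returned-signal levels: quiet baseline, then one disturbance.
    quiet = [1.00, 1.01] * 10
    signal = quiet + [1.00, 1.50, 1.00]
    alarms = detect_disturbances(signal)
    if alarms:
        print(f"ALARM: disturbance at sample(s) {alarms}")
```

A deployed system would typically need to distinguish intrusions from wind, traffic and other benign vibration, which a fixed threshold alone does not do.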

Power transmission

Optical fiber can be used to transmit power, using a photovoltaic cell to convert the light into electricity. While this method of power transmission is not as efficient as conventional ones, it is especially useful in situations where a metallic conductor is undesirable, such as near MRI machines, which produce strong magnetic fields. Other examples include powering electronics in high-powered antenna elements and measurement devices used in high-voltage transmission equipment.